Spring 2021

Modern conventional hard drives export a linear address space. In other words, they present the OS with the illusion of an array of n logical block addresses (LBAs), numbered from 0 to n-1, that the OS can access by block number. The OS must read/write at the granularity of blocks.
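To make the "array of blocks" view concrete, here is a minimal sketch of how a byte-range request maps onto LBAs. The 4096-byte block size and the helper names `lba_to_byte_offset` and `blocks_touched` are assumptions chosen for illustration, not part of any real driver API.

```python
BLOCK_SIZE = 4096  # assumed block size in bytes (illustrative)


def lba_to_byte_offset(lba: int, block_size: int = BLOCK_SIZE) -> int:
    """Byte offset at which logical block `lba` starts."""
    return lba * block_size


def blocks_touched(offset: int, length: int, block_size: int = BLOCK_SIZE) -> range:
    """LBAs that must be transferred to satisfy a byte-range request."""
    if length <= 0:
        return range(0)
    first = offset // block_size
    last = (offset + length - 1) // block_size
    return range(first, last + 1)


# A 200-byte read that straddles a block boundary still costs two whole blocks.
print(list(blocks_touched(offset=4000, length=200)))  # [0, 1]
```

Because the device only transfers whole blocks, even a small request that crosses a block boundary forces the OS to read every block it touches.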

Most modern hard drives are “advanced format” HDDs, which means that their sector size exceeds the older standard of 512 bytes. A common physical block size is 4 KiB (4096 bytes). Memory pages are commonly 4096 bytes as well; this is not a coincidence! When the OS caches disk data in page-sized units, matching the page size to the device block size means each cached page corresponds to exactly one device block.

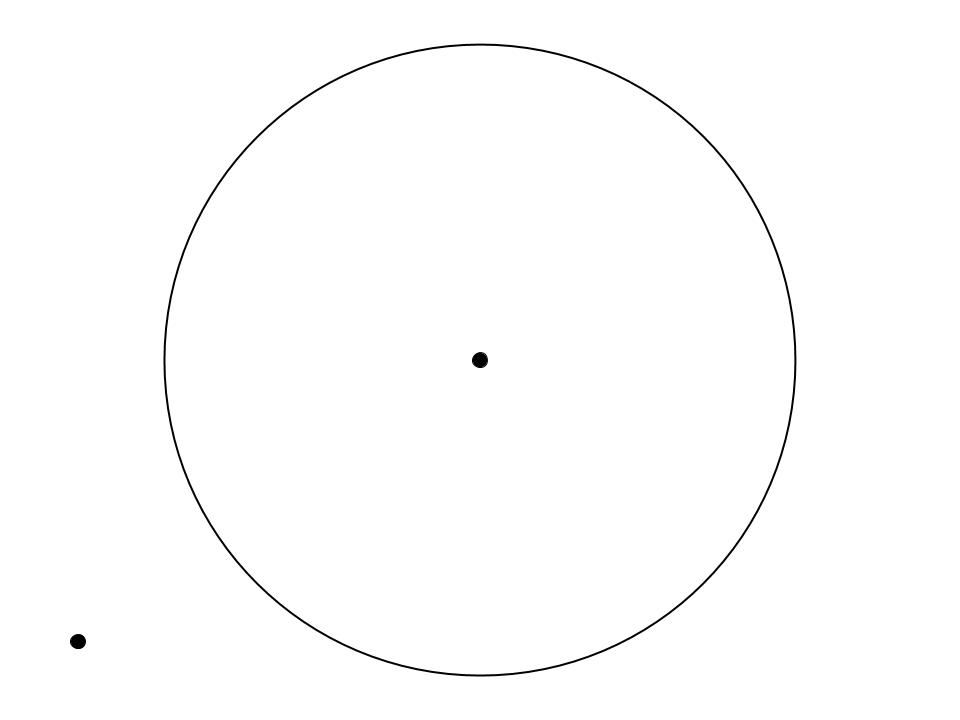

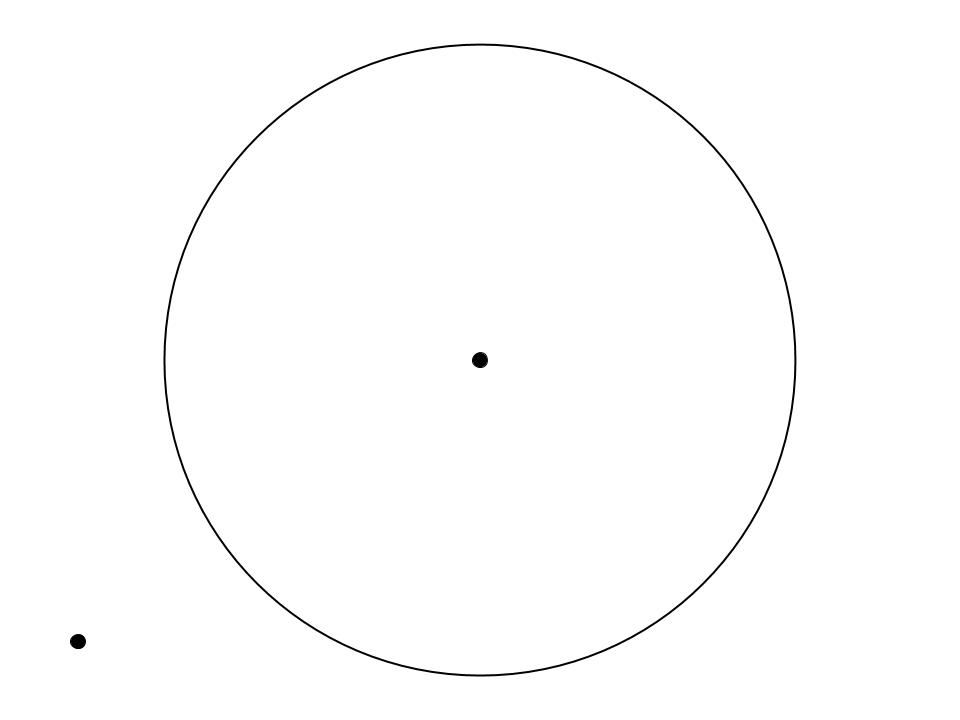

Disks are essentially a stack of spinning platters coated with magnetic material. As the platters spin, an arm sweeps back and forth between the center and the outer edge so that the head at its tip can read and write data.

Looking at your picture above, does each sector occupy the same area on the platter? Since the platter spins as a unit, how fast does a point located on the outside of the platter move relative to a point located on the inside of the platter?
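One way to reason about the second question is to compute the linear speed of a point at a given radius. The sketch below assumes a 7200 RPM drive and inner and outer track radii of 20 mm and 45 mm; these numbers are hypothetical and chosen only to illustrate the relationship.

```python
import math


def linear_speed_m_per_s(radius_m: float, rpm: float) -> float:
    """Speed of a point at `radius_m` on a platter spinning at `rpm`."""
    revolutions_per_second = rpm / 60.0
    circumference_m = 2 * math.pi * radius_m
    return circumference_m * revolutions_per_second


RPM = 7200             # assumed rotation rate
INNER_RADIUS_M = 0.020  # assumed innermost track radius
OUTER_RADIUS_M = 0.045  # assumed outermost track radius

inner = linear_speed_m_per_s(INNER_RADIUS_M, RPM)
outer = linear_speed_m_per_s(OUTER_RADIUS_M, RPM)
print(f"inner: {inner:.2f} m/s, outer: {outer:.2f} m/s, ratio: {outer / inner:.2f}")
```

Every point completes a revolution in the same amount of time, so linear speed grows in proportion to the radius: under these assumptions the outer track moves 2.25 times faster under the head than the inner track.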

To read or write data from the platter, the disk arm must pass over the target sector. There are two parts to any I/O:

* Setup
* Transfer

We can think of the setup as all the work necessary to locate the disk arm at the appropriate physical location, and the transfer as all of the work that takes place starting from the moment that the reading/writing of the first byte begins.
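The setup/transfer split can be turned into a rough cost model. The sketch below assumes that setup consists of an average seek plus half a rotation of rotational delay, and that transfer proceeds at a fixed rate. The 4 ms seek, 7200 RPM, and 100 MB/s figures are hypothetical defaults, not measurements of any particular drive.

```python
def setup_ms(seek_ms: float, rpm: float) -> float:
    """Assumed setup cost: average seek plus half a rotation on average."""
    full_rotation_ms = 60_000.0 / rpm
    return seek_ms + full_rotation_ms / 2


def transfer_ms(num_bytes: int, rate_bytes_per_s: float) -> float:
    """Time to move `num_bytes` once the head is in position."""
    return num_bytes / rate_bytes_per_s * 1000.0


def io_time_ms(num_bytes: int, seek_ms: float = 4.0, rpm: float = 7200,
               rate_bytes_per_s: float = 100e6) -> float:
    """Total I/O time = setup + transfer, under the assumptions above."""
    return setup_ms(seek_ms, rpm) + transfer_ms(num_bytes, rate_bytes_per_s)


for size in (4096, 1024 * 1024):
    total = io_time_ms(size)
    share = setup_ms(4.0, 7200) / total
    print(f"{size:>8} bytes: {total:.2f} ms total, {share:.0%} spent on setup")
```

Under these assumptions a single 4 KiB request takes roughly 8.2 ms, almost all of it setup, which is why large sequential transfers amortize the setup cost so much better than small random ones.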

Disk geometry affects the costs of I/Os in interesting ways.

HDDs have their own internal memory buffers; these disk buffers are typically on the order of tens of MiB.

If the OS and disk were to attempt to immediately satisfy every I/O request, and to strictly complete requests in the order that they were received (i.e. FIFO request scheduling), performance would likely be poor. Why?

Multiple applications may simultaneously request access to the disk, and they must all share a single disk head when reading/writing data. Further, no single application’s requests will exhibit perfect locality (metadata is often separated from the data it describes, so even sequential data requests may require seeking: the file system must first read the metadata required in order to find the location of the target data).

Given these challenges, we need more sophisticated strategies to order requests; we want to avoid as many unnecessary disk seeks as possible. A good scheduling algorithm should strive to:

The textbook describes the principle of shortest job first (SJF). As the scheduler receives a series of requests, a queue may build. The queue can be (re)ordered in a variety of ways.
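As an illustration of how reordering the queue changes cost, the sketch below compares FIFO order against a greedy shortest-seek-first order, which is one disk-flavored reading of the SJF principle. The track numbers, the starting head position, and the function names are hypothetical, and seek cost is approximated as the distance in tracks.

```python
def total_seek_distance(start: int, order: list[int]) -> int:
    """Total head movement (in tracks) when servicing `order` from `start`."""
    distance, position = 0, start
    for track in order:
        distance += abs(track - position)
        position = track
    return distance


def shortest_seek_first(start: int, pending: list[int]) -> list[int]:
    """Greedy reordering: always service the pending request nearest the head."""
    remaining = list(pending)
    position, order = start, []
    while remaining:
        nearest = min(remaining, key=lambda track: abs(track - position))
        remaining.remove(nearest)
        order.append(nearest)
        position = nearest
    return order


head = 53                                        # assumed starting track
requests = [98, 183, 37, 122, 14, 124, 65, 67]   # assumed arrival order

print(total_seek_distance(head, requests))                             # 640
print(total_seek_distance(head, shortest_seek_first(head, requests)))  # 236
```

The greedy order cuts head movement substantially in this example, but it can starve requests far from the head if nearby requests keep arriving, which is one reason real schedulers use more elaborate policies.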

The anticipatory scheduler is alluded to in the text: an anticipatory scheduler will pause for a short time before dispatching an I/O from the queue. If a new I/O arrives, the scheduler has more information to help it optimize its I/O ordering.
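The benefit of pausing can be seen in a toy scenario with one queued request far from the head and one request that arrives slightly later but close to the head. The sketch below is a simplified model rather than a real scheduler implementation: it ignores the cost of the pause itself, and the track numbers and function names are hypothetical.

```python
def seek_distance(head: int, order: list[int]) -> int:
    """Total head movement (in tracks) when servicing `order` from `head`."""
    total = 0
    for track in order:
        total += abs(track - head)
        head = track
    return total


def dispatch_immediately(head: int, queued: int, late: int) -> list[int]:
    """No pause: the queued request is dispatched before the late one is seen."""
    return [queued, late]


def dispatch_after_pause(head: int, queued: int, late: int) -> list[int]:
    """Pause briefly, so both requests are visible, then serve the nearest first."""
    return sorted([queued, late], key=lambda track: abs(track - head))


head, queued, late = 100, 900, 104  # assumed positions, in tracks

print(seek_distance(head, dispatch_immediately(head, queued, late)))  # 1596
print(seek_distance(head, dispatch_after_pause(head, queued, late)))  # 800
```

In this model the short pause roughly halves the head movement, because the scheduler avoids seeking away and then back again. The trade-off is that the disk sits idle during the pause, so the wait only pays off when a nearby request is likely to arrive.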

More complex scheduling algorithms may prioritize certain applications over others.